Ten days ago I started using four ultra-low-cost tablets based on Intel Atom processors and running Windows 10 as monitoring stations (or “remote probes”, as we call them in the context of PRTG). Each of them monitors about 100 sensors in my home LAN, including wifi quality and temperature. Here are my experiences from the first 10 days:

Quick Summary

The best choice is the Chuwi Hi8 8.0″ Tablet PC (€78), closely followed by the Odys Winpad X9 (€103).

Build quality, displays and overall experience were impressive for such low-cost hardware. The Chuwi has the fastest processor and a brilliant display, and the hands-on experience is not as far from an iPad as the price difference might suggest.

The two other contenders, the TrekStor SurfTab wintron 7.0 (€65) and the Teclast X80 Pro 8″ Tablet PC (€98), both ran into problems during the test.

Let’s look at the details:

Tablet Reliability

Overall, all four tablets did a very reliable job over the first 10 days. Once set up, they all ran 24/7 without crashes, power problems or any other serious hiccups, and all probes reported 100% uptime over 7 days.
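To put “100% uptime over 7 days” in perspective: a week has 604,800 seconds, so even a single 10-minute outage would have pulled the figure down to about 99.9%. A minimal sketch of that arithmetic; the helper `uptime_percent` is hypothetical and not how PRTG itself computes uptime, and it assumes outages do not overlap and lie inside the period:

```python
from datetime import timedelta


def uptime_percent(period: timedelta, outages: list[timedelta]) -> float:
    """Share of the period during which the probe was up, in percent.

    Hypothetical helper: assumes the outages do not overlap and all fall
    inside the period.
    """
    down = sum(outages, timedelta())  # total downtime
    return 100.0 * (period - down) / period  # timedelta / timedelta -> float


week = timedelta(days=7)
print(uptime_percent(week, []))  # no outages -> 100.0
print(round(uptime_percent(week, [timedelta(minutes=10)]), 3))  # -> 99.901
```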

But there are differences:

- The wifi module of the Teclast is clearly inferior to the other three. The tablet could only connect and hold the connection reliably when placed in the same room as the access point; everywhere else, the connection dropped every few minutes. This makes it pretty useless for monitoring in general and for wifi quality monitoring in particular.

- Only the Odys and the Chuwi tablets provide useful wifi connection quality data; with the other two (Teclast and TrekStor), the quality reading is either 100% or 0%.

- The built-in temperature sensors seem to be placed very close to the CPU in all tablets, which makes them pretty much useless for environmental monitoring: even when placed outside in freezing temperatures, the tablets reported 30°C.
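One way to spot the all-or-nothing wifi quality readings in recorded data is to check whether a channel only ever takes the values 0% and 100%. A minimal sketch, assuming the readings have already been exported as a list of percentages; the function name and the sample values are hypothetical illustrations, not measurements from this test:

```python
def is_effectively_binary(readings: list[float], tolerance: float = 0.0) -> bool:
    """True if every reading is (within tolerance) either 0% or 100%.

    An empty list returns False: no data is not evidence of a binary channel.
    """
    if not readings:
        return False
    return all(min(abs(r - 0.0), abs(r - 100.0)) <= tolerance for r in readings)


# Hypothetical sample series, for illustration only.
graded = [72.0, 68.0, 75.0, 70.0]     # a channel with useful, graded values
binary = [100.0, 100.0, 0.0, 100.0]   # a channel that only reports on/off

print(is_effectively_binary(graded))  # False
print(is_effectively_binary(binary))  # True
```

A channel flagged this way still tells you whether the tablet is connected, but not how good the connection is.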

Tablet Performance

I used PRTG’s standardized CPU, memory and disk performance tests to compare the tablets (test duration in ms; lower is better):

| Tablet | CPU Test (ms) | Disk Test (ms) | Memory Test (ms) |
|---|---|---|---|
| Chuwi | 1,435 | 23,686 | 1,047 |
| Teclast | 1,758 | 22,236 | 1,228 |
| TrekStor | 1,917 | 29,912 | 1,414 |
| Odys | 1,742 | 26,008 | 1,201 |

The Chuwi Hi8, the second cheapest at €78, has the most powerful CPU (the only one clocked above 2 GHz in our test field) and is fastest in the CPU and memory tests. Only the disk test goes to the Teclast, probably thanks to faster storage chips.
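The per-test winners and the price ranking can be read straight off the table and the prices in the summary. A minimal sketch that does this mechanically; the dictionary layout and the helper names `fastest` and `price_rank` are illustrative choices, and the numbers are copied from this article:

```python
# Test durations in ms (lower is better) from the performance table,
# prices in EUR from the summary.
results = {
    "Chuwi":    {"price": 78,  "cpu": 1435, "disk": 23686, "memory": 1047},
    "Teclast":  {"price": 98,  "cpu": 1758, "disk": 22236, "memory": 1228},
    "TrekStor": {"price": 65,  "cpu": 1917, "disk": 29912, "memory": 1414},
    "Odys":     {"price": 103, "cpu": 1742, "disk": 26008, "memory": 1201},
}


def fastest(test: str) -> str:
    """Name of the tablet with the lowest duration for the given test."""
    return min(results, key=lambda name: results[name][test])


def price_rank(name: str) -> int:
    """Position of a tablet when sorted by price (1 = cheapest)."""
    ordered = sorted(results, key=lambda n: results[n]["price"])
    return ordered.index(name) + 1


for test in ("cpu", "disk", "memory"):
    print(test, fastest(test))
print("Chuwi price rank:", price_rank("Chuwi"))
```

Running it prints `cpu Chuwi`, `disk Teclast`, `memory Chuwi` and `Chuwi price rank: 2`.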

Monitoring Results

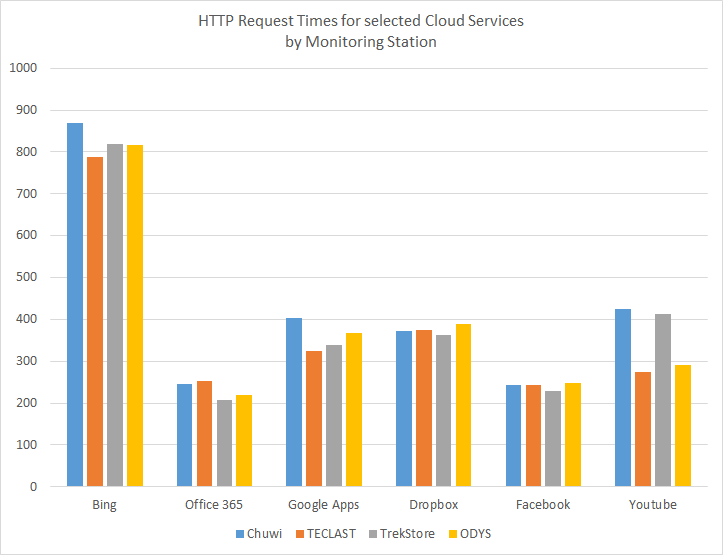

Using the Common SaaS Services sensor, I monitored the HTTP request times of various cloud services on the Internet. Here are the averages (in ms) over several days:

| Tablet | Office 365 | Bing | Google Apps | Dropbox | Youtube | |
|---|---|---|---|---|---|---|
| Chuwi | 246 | 868 | 404 | 372 | 243 | 425 |
| Teclast | 254 | 788 | 324 | 374 | 244 | 275 |
| TrekStor | 207 | 818 | 338 | 362 | 229 | 412 |
| Odys | 220 | 817 | 367 | 388 | 247 | 290 |

Except for Youtube, which shows some variation, the request times are very similar, and all tablets deliver usable data.

